Datawisp DevUpdates | February 2023 - remote querying, multi-aggregate, PostgreSQL data export

Here are some of the top DevUpdates for the month of February 2023 - Follow us on Twitter and LinkedIn for more DevUpdates!

The past month has been super exciting! Onboarding two new developers in January has made all the difference.

We’ve made tons of progress on the product this month, so let’s start with the big stuff:

Remote Querying

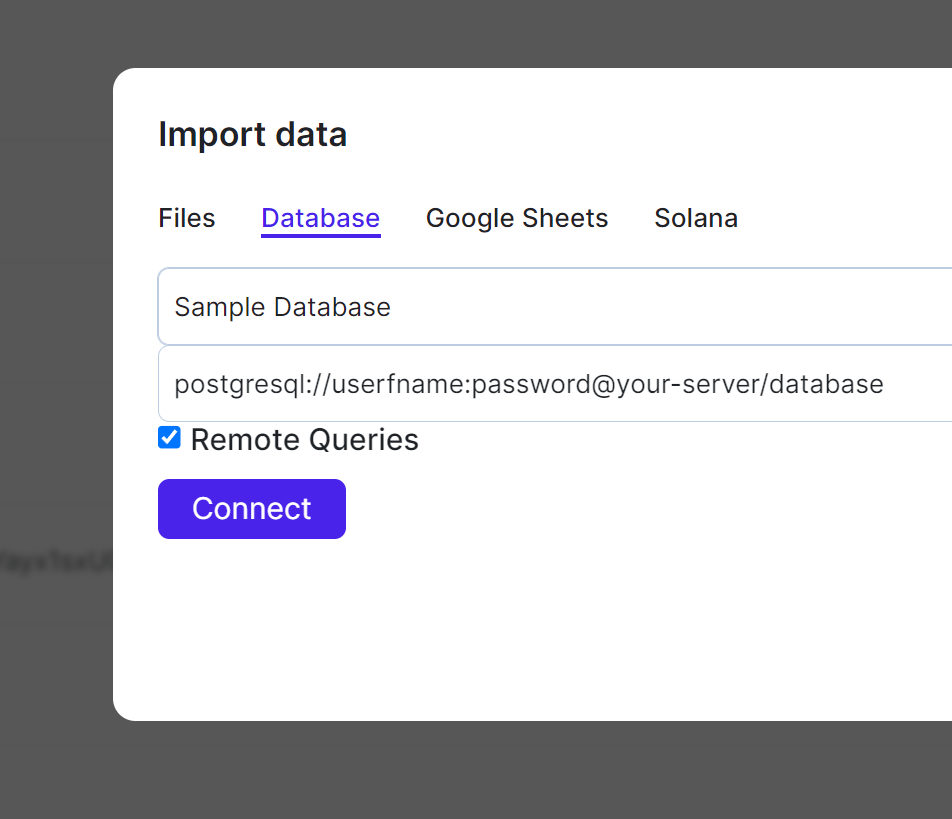

Most of your data (at least, by size) is likely already stored in some smart database system, be it Snowflake, PostgreSQL, MySQL, Google BigQuery, or one of the many other systems out there.

If you want to analyze your data, it’s not feasible to copy all of it in its entirety to our servers - we want to run as much of the computation as possible on your existing infrastructure. That’s cheaper for both you and us, as no expensive data transfer fees have to be paid. It’s also much faster.

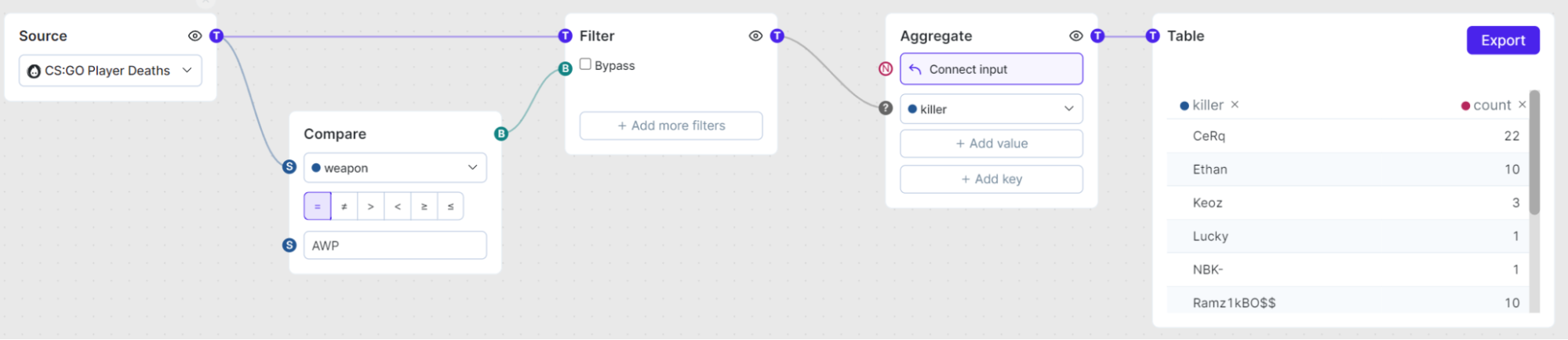

With remote querying, we find the part of the query that can run on your infrastructure, and simply run it there. For example, let’s say you had the following query:

If you want to run this on your PostgreSQL database, we can now just compile everything, including the Aggregate block, to SQL.
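To give a rough idea of what "compiling to SQL" means, here is a minimal sketch in Python. It is not Datawisp's actual compiler: the `compile_aggregate` function, the `SQL_FUNCTIONS` mapping, and the `players` table are all made up for illustration. It simply turns a description of an Aggregate block into a single SQL statement that the database can run on its own.

```python
from typing import Sequence, Tuple

# Hypothetical mapping from block-level aggregate names to SQL functions.
SQL_FUNCTIONS = {
    "sum": "SUM",
    "min": "MIN",
    "max": "MAX",
    "average": "AVG",
    "count": "COUNT",
}


def quote_ident(name: str) -> str:
    """Quote an identifier PostgreSQL-style, doubling any embedded quotes."""
    return '"' + name.replace('"', '""') + '"'


def compile_aggregate(
    table: str,
    group_by: Sequence[str],
    aggregates: Sequence[Tuple[str, str]],
) -> str:
    """Turn a (hypothetical) Aggregate block description into one SQL statement."""
    group_cols = [quote_ident(c) for c in group_by]
    agg_cols = []
    for func, column in aggregates:
        sql_func = SQL_FUNCTIONS[func]  # raises KeyError for unsupported aggregates
        alias = quote_ident(f"{func}_{column}")
        agg_cols.append(f"{sql_func}({quote_ident(column)}) AS {alias}")
    sql = f"SELECT {', '.join(group_cols + agg_cols)} FROM {quote_ident(table)}"
    if group_cols:
        sql += f" GROUP BY {', '.join(group_cols)}"
    return sql


# Example: average age per country, computed entirely by the database.
query = compile_aggregate("players", ["country"], [("average", "age")])
print(query)
```

Only the (usually small) aggregated result then has to travel over the network, which is where the cost and speed benefits come from.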

To enable this feature, just make sure to check the “Remote Queries” checkbox when importing data - the rest is automatic!

Remote Querying is currently in beta, so if you have any issues or feedback, please let us know! It’s currently supported for MySQL and PostgreSQL, but we’re always adding more. If you need a different database supported, just ask!

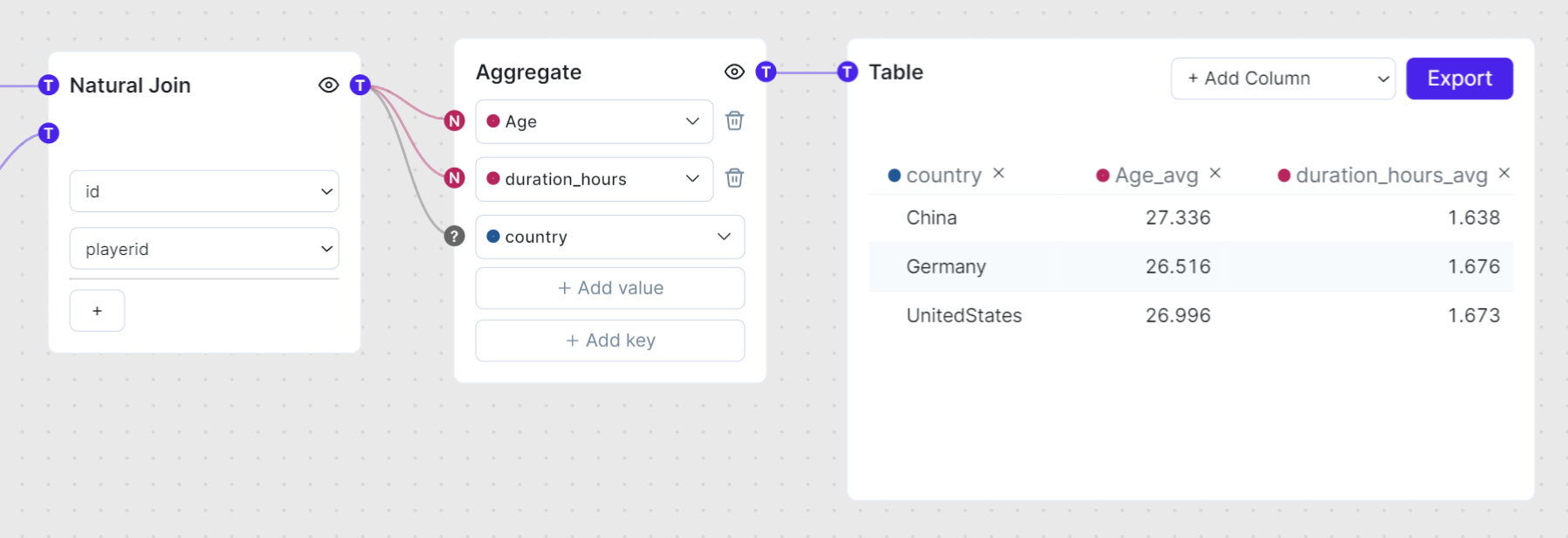

Multi-Aggregate

Early in the life of Datawisp we made a decision - you can only aggregate one column at a time. So, if you want the sum/min/max or average of more than one column, you’d need multiple aggregate blocks, and join them together.

This decision was based partly on the technical limitations of the time, and partly on our belief that it was better UX. After using it this way for a while, we’ve decided it isn’t.

The aggregate block can now take multiple values:

So, if for a given country, I want to know the average age of a player and how many hours they play, it’s now one block instead of five!
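For readers who think in SQL, the difference looks roughly like the sketch below. It uses Python's built-in `sqlite3` module as a stand-in database, and the `players` table and its rows are invented for the example; it says nothing about how Datawisp's own engine works internally. The first query mimics the old approach (one aggregate per column, joined afterwards), the second computes both values in one grouped pass.

```python
import sqlite3

# In-memory SQLite database with a small, made-up players table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE players (country TEXT, age REAL, hours REAL)")
conn.executemany(
    "INSERT INTO players VALUES (?, ?, ?)",
    [("DE", 20, 10), ("DE", 30, 20), ("US", 40, 5)],
)

# Old approach: one aggregate per column, then join the results on the group key.
joined = conn.execute(
    """
    SELECT a.country, a.avg_age, h.avg_hours
    FROM (SELECT country, AVG(age) AS avg_age FROM players GROUP BY country) AS a
    JOIN (SELECT country, AVG(hours) AS avg_hours FROM players GROUP BY country) AS h
      ON a.country = h.country
    ORDER BY a.country
    """
).fetchall()

# Multi-aggregate: both values are computed in a single grouped pass.
single = conn.execute(
    "SELECT country, AVG(age) AS avg_age, AVG(hours) AS avg_hours "
    "FROM players GROUP BY country ORDER BY country"
).fetchall()

print(single)
```

Both queries return the same rows; the second one just gets there with far less plumbing.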

Note - the UX for this is still work-in-progress, and it’ll be overhauled with the coming lego blocks update.

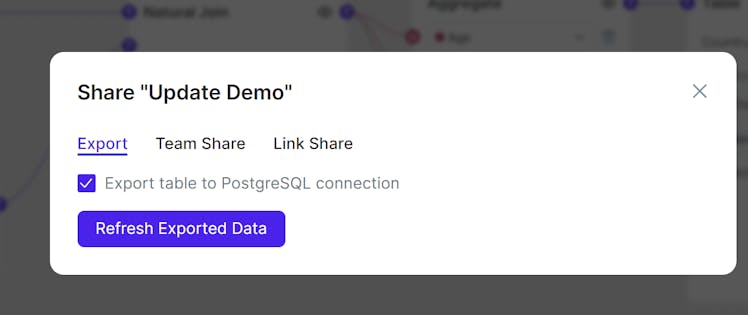

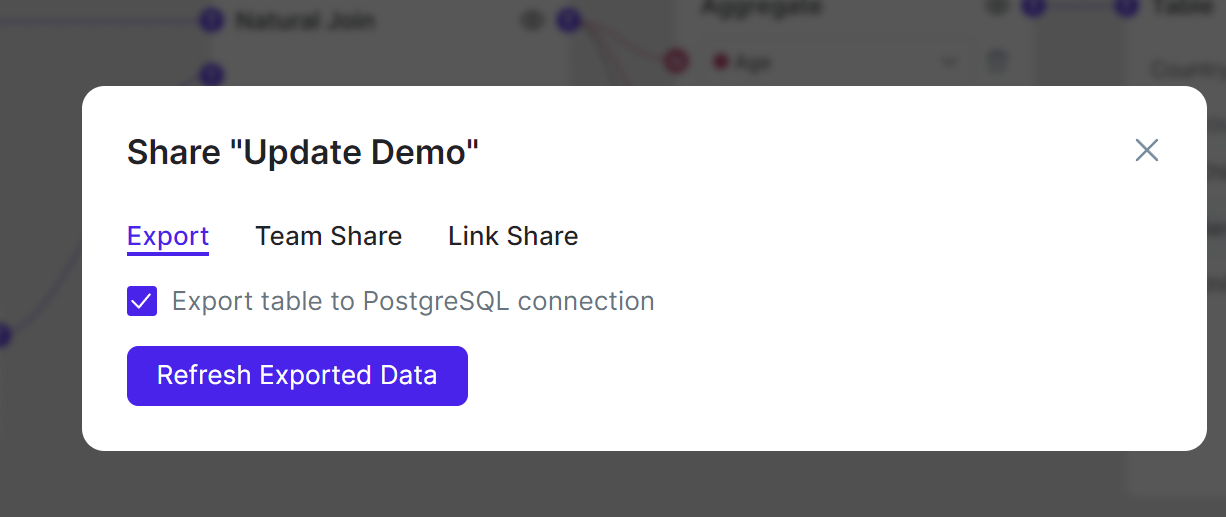

Data Export

Too many analytics platforms hoard your data. We think you should always be able to use your data as you see fit - for use in your application, with existing dashboards, or with other visualization methods. To solve this, we’ve built PostgreSQL export, so you can always get your data out of Datawisp as easily as you can import it.

If the feature is enabled on your account (talk to us!), you can easily export tables to a PostgreSQL server of your choice.

Smaller Stuff

Of course, we made lots of smaller improvements too.

We’ve significantly improved the performance of moving blocks around. It should stutter less now, especially if you have lots of sheets open at the same time.

When you create a public sharelink, it now remembers what part of the sheet you were looking at. That’s really useful when you want to share a chart or table with key insights with your community.

Tables can have names now, which enables a lot of useful functionality: sharing with others, downloads, importing data from another sheet, and the PostgreSQL export.

You can upload much bigger files now! The old limit for CSVs was around 20 MiB - it’s now around 500 MiB. Note - the limit is more of a sanity check than a technical limitation, so if you need more, ask for it!

Bug Fixes

We fixed lots of small bugs. Here are a few of them:

Sometimes, the concat block produced special null values that the rest of our database engine didn’t really understand. It should now handle null values the same way as the rest of our engine.

The Solana import failed on some programs. Now it doesn’t!

If you had two columns with the same text written in different casings (e.g. Spongebob and SpOnGeBoB), one of them would be missing data in some circumstances. That’s not ideal. So, now this should work!

The MySQL import got some polishing, and now works better than ever.

The Future

We’re nearing the end of the closed beta of Datawisp, so the next month is all about wrapping that up. That said, we have a couple of very exciting features in the pipeline!

This is by no means everything we’re currently working on, just some examples:

AI!

You’ve heard of ChatGPT. Well, we’re applying generative AI to help you analyze your data. Look out for a demo and announcement soon, because we have a working prototype!

Importing data from other sheets

Big sheets can sometimes get a bit complicated. So, we’ll allow you to export data from one sheet, and use it in a different sheet. This should make it very easy to organize your data better.

Ethereum Data

Soon, you’ll be able to query all the ERC-20 and ERC-721 tokens on both the Ethereum and Polygon blockchains. So, all your fungible and non-fungible tokens can be analyzed to your heart’s content!

I hope this gave you some insight into what we’re doing at Datawisp.

As always, we absolutely love feedback, so please reach out!

__

Footnotes

Well, not that I know of. I haven’t tried all of them.